Tiberiu Teșileanu, a physicist working in neuroscience

Publications


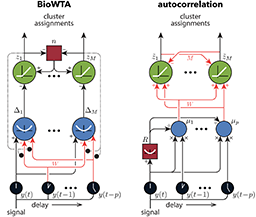

Tesileanu, T., Golkar, S., Nasiri, S., Sengupta, A.M., and Chklovskii, D.B. arXiv 2021

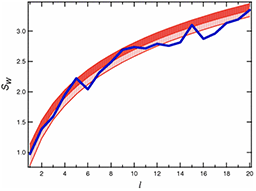

The brain must extract behaviorally relevant latent variables from the signals streamed by the sensory organs. Such latent variables are often encoded in the dynamics that generated the signal rather than in the specific realization of the waveform. Therefore, one problem faced by the brain is to segment time series based on underlying dynamics. We present two biologically plausible algorithms for performing this segmentation task, where we define biological plausibility as operating in a streaming setting and using only local learning rules. One algorithm is model based and can be derived from an optimization problem involving a mixture of autoregressive processes. This algorithm relies on feedback in the form of a prediction error and can also be used for forecasting future samples. In some brain regions, such as the retina, the feedback connections necessary to use the prediction error for learning are absent. For this case, we propose a second, model-free algorithm that uses a running estimate of the autocorrelation structure of the signal to perform the segmentation. We show that both algorithms do well when tasked with segmenting signals drawn from autoregressive models with piecewise-constant parameters. In particular, the segmentation accuracy is similar to that obtained from oracle-like methods in which the ground-truth parameters of the autoregressive models are known. We also test our methods on data sets generated by alternating snippets of voice recordings. We provide implementations of our algorithms at https://github.com/ttesileanu/bio-time-series.
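The abstract benchmarks both circuits against oracle-like methods that know the ground-truth autoregressive (AR) parameters. The Python sketch below illustrates only such an oracle baseline; it is not the paper's model-based or model-free algorithm (the authors' implementations are in the repository linked above). It assumes two AR(1) processes with hypothetical coefficients 0.9 and -0.9, unit-variance Gaussian noise, and an exponential smoothing rate of 0.05; the names `make_piecewise_ar1` and `oracle_segment` are illustrative. Each sample is assigned to whichever known model currently has the lowest running squared prediction error, a streaming winner-take-all rule.

```python
import numpy as np

def make_piecewise_ar1(coeffs, seg_len, n_segments, noise=1.0, seed=0):
    """Generate an AR(1) signal whose coefficient switches every seg_len steps."""
    rng = np.random.default_rng(seed)
    n = seg_len * n_segments
    # Ground-truth label of each sample: segments cycle through the models.
    labels = np.repeat(np.arange(n_segments) % len(coeffs), seg_len)
    x = np.zeros(n)
    for t in range(1, n):
        x[t] = coeffs[labels[t]] * x[t - 1] + noise * rng.standard_normal()
    return x, labels

def oracle_segment(x, coeffs, rate=0.05):
    """Label each sample with the known AR(1) model of lowest smoothed prediction error."""
    coeffs = np.asarray(coeffs, dtype=float)
    err = np.zeros(len(coeffs))  # exponentially smoothed squared errors, one per model
    labels = np.zeros(len(x), dtype=int)
    for t in range(1, len(x)):
        pred = coeffs * x[t - 1]                  # one-step prediction from each model
        err += rate * ((x[t] - pred) ** 2 - err)  # streaming update, uses only current sample
        labels[t] = int(np.argmin(err))           # winner-take-all assignment
    return labels

x, truth = make_piecewise_ar1([0.9, -0.9], seg_len=500, n_segments=6)
inferred = oracle_segment(x, [0.9, -0.9])
accuracy = np.mean(inferred == truth)
print(f"segmentation accuracy: {accuracy:.3f}")
```

The smoothing rate trades responsiveness at segment boundaries against robustness to noise: a larger rate reacts faster after a switch but flips labels more often within a segment.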

@article{Tesileanu2021, image = {mypub_imgs_seq_seg.png}, bibtex_show = {true}, selected = {true}, archiveprefix = {arXiv}, arxivid = {2104.11852}, author = {Tesileanu, Tiberiu and Golkar, Siavash and Nasiri, Samaneh and Sengupta, Anirvan M. and Chklovskii, Dmitri B.}, doi = {10.1162/neco_a_01476}, journal = {arXiv}, eprint = {2104.11852}, pages = {1--34}, title = {{Neural circuits for dynamics-based segmentation of time series}}, keywords = {biowta}, url = {http://arxiv.org/abs/2104.11852}, pdf = {https://arxiv.org/pdf/2104.11852.pdf}, year = {2021} }

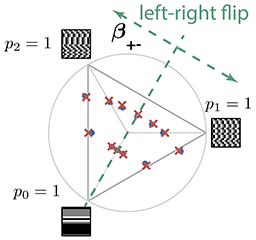

Tesileanu, T., Conte, M.M., Briguglio, J.J., Hermundstad, A.M., Victor, J.D. et al. eLife 2020

Previously (Hermundstad et al., 2014), we showed that when sampling is limiting, the efficient coding principle leads to a “variance is salience” hypothesis, and that this hypothesis accounts for visual sensitivity to binary image statistics. Here, using extensive new psychophysical data and image analysis, we show that this hypothesis accounts for visual sensitivity to a large set of grayscale image statistics at a striking level of detail, and also identify the limits of the prediction. We define a 66-dimensional space of local grayscale light-intensity correlations, and measure the relevance of each direction to natural scenes. The “variance is salience” hypothesis predicts that two-point correlations are most salient, and predicts their relative salience. We tested these predictions in a texture-segregation task using unnatural, synthetic textures. As predicted, correlations beyond second order are not salient, and predicted thresholds for over 300 second-order correlations match psychophysical thresholds closely (median fractional error <0.13).

@article{Tesileanu2020, image = {mypub_imgs_eff_coding_tex.png}, bibtex_show = {true}, author = {Tesileanu, Tiberiu and Conte, Mary M. and Briguglio, John J. and Hermundstad, Ann M. and Victor, Jonathan D. and Balasubramanian, Vijay}, doi = {10.7554/ELIFE.54347}, journal = {eLife}, pages = {1--35}, title = {{Efficient coding of natural scene statistics predicts discrimination thresholds for grayscale textures}}, volume = {9}, year = {2020}, keywords = {viztex}, url = {https://elifesciences.org/articles/54347}, pdf = {https://elifesciences.org/articles/54347.pdf} }

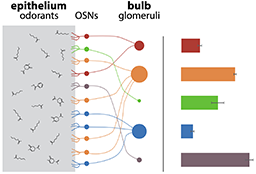

Tesileanu, T., Cocco, S., Monasson, R., and Balasubramanian, V. eLife 2019

Olfactory receptor usage is highly heterogeneous, with some receptor types being orders of magnitude more abundant than others. We propose an explanation for this striking fact: the receptor distribution is tuned to maximally represent information about the olfactory environment in a regime of efficient coding that is sensitive to the global context of correlated sensor responses. This model predicts that in mammals, where olfactory sensory neurons are replaced regularly, receptor abundances should continuously adapt to odor statistics. Experimentally, increased exposure to odorants leads variously, but reproducibly, to increased, decreased, or unchanged abundances of different activated receptors. We demonstrate that this diversity of effects is required for efficient coding when sensors are broadly correlated, and provide an algorithm for predicting which olfactory receptors should increase or decrease in abundance following specific environmental changes. Finally, we give simple dynamical rules for neural birth and death processes that might underlie this adaptation.

@article{Tesileanu2019, image = {mypub_imgs_eff_coding_smell.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {1801.09300}, author = {Tesileanu, Tiberiu and Cocco, Simona and Monasson, Remi and Balasubramanian, Vijay}, eprint = {1801.09300}, journal = {eLife}, pages = {e39279}, title = {{Adaptation of olfactory receptor abundances for efficient coding}}, volume = {8}, year = {2019}, keywords = {orn}, url = {https://elifesciences.org/articles/39279}, pdf = {https://elifesciences.org/articles/39279.pdf} }

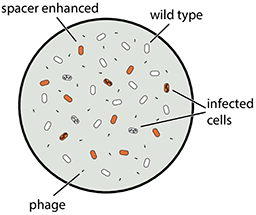

Bradde, S., Vucelja, M., Tesileanu, T., and Balasubramanian, V. PLoS Computational Biology 2017

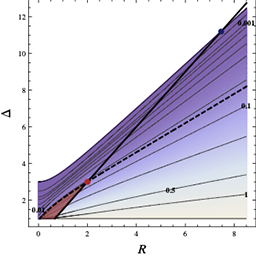

The CRISPR (clustered regularly interspaced short palindromic repeats) mechanism allows bacteria to adaptively defend against phages by acquiring short genomic sequences (spacers) that target specific sequences in the viral genome. We propose a population dynamical model where immunity can be both acquired and lost. The model predicts regimes where bacterial and phage populations can co-exist, others where the populations exhibit damped oscillations, and still others where one population is driven to extinction. Our model considers two key parameters: (1) ease of acquisition and (2) spacer effectiveness in conferring immunity. Analytical calculations and numerical simulations show that if spacers differ mainly in ease of acquisition, or if the probability of acquiring them is sufficiently high, bacteria develop a diverse population of spacers. On the other hand, if spacers differ mainly in their effectiveness, their final distribution will be highly peaked, akin to a “winner-take-all” scenario, leading to a specialized spacer distribution. Bacteria can interpolate between these limiting behaviors by actively tuning their overall acquisition probability.

@article{Bradde2017, image = {mypub_imgs_crispr.png}, bibtex_show = {true}, author = {Bradde, Serena and Vucelja, Marija and Tesileanu, Tiberiu and Balasubramanian, Vijay}, doi = {10.1371/journal.pcbi.1005486}, journal = {PLoS Computational Biology}, number = {4}, title = {{Dynamics of adaptive immunity against phage in bacterial populations}}, volume = {13}, year = {2017}, keywords = {crispr}, url = {https://journals.plos.org/ploscompbiol/article?id=10.1371/journal.pcbi.1005486}, pdf = {https://journals.plos.org/ploscompbiol/article/file?id=10.1371/journal.pcbi.1005486&type=printable} }

Tesileanu, T., Ölveczky, B., and Balasubramanian, V. eLife 2017

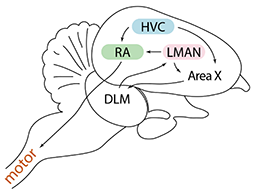

Trial-and-error learning requires evaluating variable actions and reinforcing successful variants. In songbirds, vocal exploration is induced by LMAN, the output of a basal ganglia-related circuit that also contributes a corrective bias to the vocal output. This bias is gradually consolidated in RA, a motor cortex analogue downstream of LMAN. We develop a new model of such two-stage learning. Using stochastic gradient descent, we derive how the activity in ‘tutor’ circuits (e.g., LMAN) should match plasticity mechanisms in ‘student’ circuits (e.g., RA) to achieve efficient learning. We further describe a reinforcement learning framework through which the tutor can build its teaching signal. We show that mismatches between the tutor signal and the plasticity mechanism can impair learning. Applied to birdsong, our results predict the temporal structure of the corrective bias from LMAN given a plasticity rule in RA. Our framework can be applied predictively to other paired brain areas showing two-stage learning.

@article{Tesileanu2017, image = {mypub_imgs_two_stage.png}, bibtex_show = {true}, author = {Tesileanu, Tiberiu and {\"{O}}lveczky, Bence and Balasubramanian, Vijay}, doi = {10.7554/eLife.20944}, journal = {eLife}, title = {{Rules and mechanisms for efficient two-stage learning in neural circuits}}, volume = {6}, year = {2017}, keywords = {birdsong}, url = {https://elifesciences.org/articles/20944}, pdf = {https://elifesciences.org/articles/20944.pdf} }

Tesileanu, T., Colwell, L.J., and Leibler, S. PLOS Computational Biology 2015

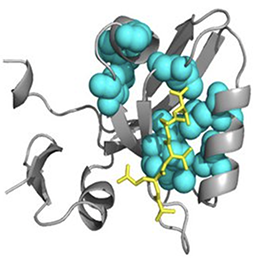

Statistical coupling analysis (SCA) is a method for analyzing multiple sequence alignments that was used to identify groups of coevolving residues termed “sectors”. The method applies spectral analysis to a matrix obtained by combining correlation information with sequence conservation. It has been asserted that the protein sectors identified by SCA are functionally significant, with different sectors controlling different biochemical properties of the protein. Here we reconsider the available experimental data and note that they involve almost exclusively proteins with a single sector. We show that in this case sequence conservation is the dominating factor in SCA, and can alone be used to make statistically equivalent functional predictions. Therefore, we suggest shifting the experimental focus to proteins for which SCA identifies several sectors. Correlations in protein alignments, which have been shown to be informative in a number of independent studies, would then be less dominated by sequence conservation.

@article{Tesileanu2015, image = {mypub_imgs_protein_sectors.png}, bibtex_show = {true}, selected = {true}, author = {Tesileanu, Tiberiu and Colwell, Lucy J. and Leibler, Stanislas}, doi = {10.1371/journal.pcbi.1004091}, journal = {PLOS Computational Biology}, number = {2}, pages = {e1004091}, title = {{Protein Sectors: Statistical Coupling Analysis versus Conservation}}, keywords = {protalign}, url = {http://dx.plos.org/10.1371/journal.pcbi.1004091}, pdf = {https://journals.plos.org/ploscompbiol/article/file?id=10.1371/journal.pcbi.1004091&type=printable}, volume = {11}, year = {2015} }

Melnikov, A., Murugan, A., Zhang, X., Tesileanu, T., Wang, L. et al. Nature Biotechnology Feb 2012

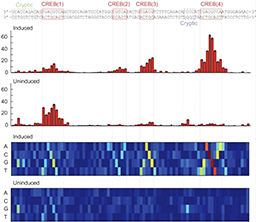

Learning to read and write the transcriptional regulatory code is of central importance to progress in genetic analysis and engineering. Here we describe a massively parallel reporter assay (MPRA) that facilitates the systematic dissection of transcriptional regulatory elements. In MPRA, microarray-synthesized DNA regulatory elements and unique sequence tags are cloned into plasmids to generate a library of reporter constructs. These constructs are transfected into cells and tag expression is assayed by high-throughput sequencing. We apply MPRA to compare ≥27,000 variants of two inducible enhancers in human cells: a synthetic cAMP-regulated enhancer and the virus-inducible interferon-β enhancer. We first show that the resulting data define accurate maps of functional transcription factor binding sites in both enhancers at single-nucleotide resolution. We then use the data to train quantitative sequence-activity models (QSAMs) of the two enhancers. We show that QSAMs from two cellular states can be combined to design enhancer variants that optimize potentially conflicting objectives, such as maximizing induced activity while minimizing basal activity.

@article{Melnikov2012, image = {mypub_imgs_mpra.png}, bibtex_show = {true}, selected = {true}, author = {Melnikov, Alexandre and Murugan, Anand and Zhang, Xiaolan and Tesileanu, Tiberiu and Wang, Li and Rogov, Peter and Feizi, Soheil and Gnirke, Andreas and Callan, Curtis G. and Kinney, Justin B. and Kellis, Manolis and Lander, Eric S. and Mikkelsen, Tarjei S.}, doi = {10.1038/nbt.2137}, journal = {Nature Biotechnology}, month = feb, number = {3}, pages = {271--277}, pmid = {22371084}, publisher = {Nature Publishing Group, a division of Macmillan Publishers Limited. All Rights Reserved.}, shorttitle = {Nat Biotech}, keywords = {transcription}, title = {{Systematic dissection and optimization of inducible enhancers in human cells using a massively parallel reporter assay}}, url = {https://www.nature.com/articles/nbt.2137}, volume = {30}, year = {2012} }

Tesileanu, T. PhD thesis, Princeton University Feb 2011

The AdS/CFT duality is an equivalence between string theory and gauge theory. The duality allows one to use calculations done in classical gravity to derive results in strongly-coupled field theories. This thesis explores several applications of the duality that have some relevance to condensed matter physics. In the first of these applications, it is shown that a large class of strongly-coupled (3+1)-dimensional conformal field theories undergo a superfluid phase transition in which a certain chiral primary operator develops a non-zero expectation value at low temperatures. A suggestion is made for the identity of the condensing operator in the field theory. In a different application, the conifold theory, an \(\mathrm {SU}(N) \times \mathrm{SU}(N)\) gauge theory, is studied at nonzero chemical potential for baryon number density. In the low-temperature limit, the near-horizon geometry of the dual supergravity solution becomes a warped product \(\mathrm{AdS}_2 \times \mathbb R^3\times T^{1,1}\), with logarithmic warp factors. This encodes a type of emergent quantum near-criticality in the field theory. A similar construction is analyzed in the context of M theory. This construction is based on branes wrapped around topologically nontrivial cycles of the geometry. Several non-supersymmetric solutions are found, which pass a number of stability checks. Reducing one of the solutions to type IIA string theory and T-dualizing to type IIB yields a product of a squashed Sasaki-Einstein manifold with an extremal BTZ black hole. Possible field theory interpretations are discussed.

@phdthesis{Tesileanu2011, image = {mypub_imgs_phd_thesis.png}, bibtex_show = {true}, author = {Tesileanu, Tiberiu}, number = {September}, school = {Princeton University}, title = {{Charged black holes and the AdS/CFT correspondence}}, year = {2011}, url = {https://ui.adsabs.harvard.edu/abs/2011PhDT.......103T/abstract}, pdf = {phdthesis.pdf} }

Herzog, C.P., Klebanov, I.R., Pufu, S.S., and Tesileanu, T. Physical Review D Nov 2011

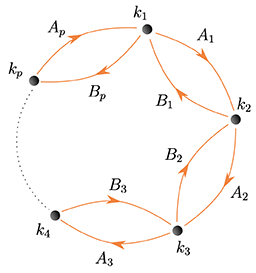

Localization methods reduce the path integrals in \(\mathcal N \ge 2\) supersymmetric Chern-Simons gauge theories on \(S^3\) to multi-matrix integrals. A recent evaluation of such a two-matrix integral for the \(\mathcal N=6\) superconformal \(\mathrm U(N) \times \mathrm U(N)\) ABJM theory produced detailed agreement with the AdS/CFT correspondence, explaining, in particular, the \(N^{3/2}\) scaling of the free energy. We study a class of \(p\)-matrix integrals describing \(\mathcal N=3\) superconformal \(\mathrm U(N)^p\) Chern-Simons gauge theories. We present a simple method that allows us to evaluate the eigenvalue densities and the free energies in the large \(N\) limit keeping the Chern-Simons levels \(k_i\) fixed. The dual M-theory backgrounds are \(\mathrm {AdS}_4 \times Y\), where \(Y\) are seven-dimensional tri-Sasaki Einstein spaces specified by the \(k_i\). The gravitational free energy scales inversely with the square root of the volume of \(Y\). We find a general formula for the \(p\)-matrix free energies that agrees with the available results for volumes of the tri-Sasaki Einstein spaces \(Y\), thus providing a thorough test of the corresponding \(\mathrm {AdS}_4/\mathrm{CFT}_3\) dualities. This formula is consistent with the Seiberg duality conjectured for Chern-Simons gauge theories.
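For context, the inverse-square-root dependence on the volume of \(Y\) is usually written as the standard \(\mathrm{AdS}_4/\mathrm{CFT}_3\) relation below. It is stated here from the general literature rather than quoted from this paper, and it assumes the metric on \(Y\) is normalized so that \(R_{mn} = 6 g_{mn}\). For \(Y = S^7\) it reproduces the \(N^{3/2}\) free energy of ABJM theory at Chern-Simons level \(k = 1\):

\[
F = N^{3/2}\sqrt{\frac{2\pi^6}{27\,\mathrm{Vol}(Y)}}, \qquad
\mathrm{Vol}(S^7) = \frac{\pi^4}{3} \;\Longrightarrow\; F = N^{3/2}\sqrt{\frac{2\pi^2}{9}} = \frac{\pi\sqrt{2}}{3}\,N^{3/2}.
\]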

@article{Herzog2010, image = {mypub_imgs_strings_quiver.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {1011.5487}, author = {Herzog, Christopher P. and Klebanov, Igor R. and Pufu, Silviu S. and Tesileanu, Tiberiu}, doi = {10.1103/PhysRevD.83.046001}, eprint = {1011.5487}, journal = {Physical Review D}, month = nov, number = {046001}, title = {{Multi-Matrix Models and Tri-Sasaki Einstein Spaces}}, url = {https://journals.aps.org/prd/abstract/10.1103/PhysRevD.83.046001}, pdf = {https://arxiv.org/pdf/1011.5487.pdf}, volume = {83}, year = {2011} }

Klebanov, I.R., Pufu, S.S., and Tesileanu, T. Physical Review D Apr 2010

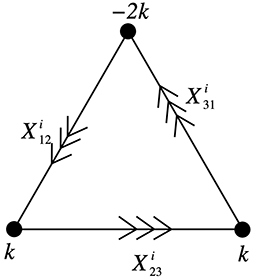

If the second Betti number \(b_2\) of a Sasaki-Einstein manifold \(Y^7\) does not vanish, then M-theory on \(\mathrm {AdS}_4 \times Y^7\) possesses “topological” \(\mathrm U(1)^{b_2}\) gauge symmetry. The corresponding Abelian gauge fields come from three-form fluctuations with one index in \(\mathrm {AdS}_4\) and the other two in \(Y^7\). We find black membrane solutions carrying one of these \(\mathrm U(1)\) charges. In the zero temperature limit, our solutions interpolate between \(\mathrm {AdS}_4 \times Y^7\) in the UV and \(\mathrm {AdS}_2 \times \mathbb R^2 \times \text{squashed }Y^7\) in the IR. In fact, the \(\mathrm {AdS}_2 \times \mathbb R^2 \times \text{squashed }Y^7\) background is by itself a solution of the supergravity equations of motion. These solutions do not appear to preserve any supersymmetry. We search for their possible instabilities and do not find any. We also discuss the meaning of our charged membrane backgrounds in a dual quiver Chern-Simons gauge theory with a global \(\mathrm U(1)\) charge density. Finally, we present a simple analytic solution which has the same IR but different UV behavior. We reduce this solution to type IIA string theory, and perform T-duality to type IIB. The type IIB metric turns out to be a product of the squashed \(Y^7\) and the extremal BTZ black hole. We discuss an interpretation of this type IIB background in terms of the (1+1)-dimensional CFT on D3-branes partially wrapped over the squashed \(Y^7\).

@article{Klebanov2010, image = {mypub_imgs_top_charge.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {1004.0413}, author = {Klebanov, Igor R. and Pufu, Silviu S. and Tesileanu, Tiberiu}, eprint = {1004.0413}, journal = {Physical Review D}, month = apr, number = {125011}, title = {{Membranes with Topological Charge and AdS4/CFT3 Correspondence}}, url = {https://journals.aps.org/prd/abstract/10.1103/PhysRevD.81.125011}, pdf = {https://journals.aps.org/prd/pdf/10.1103/PhysRevD.81.125011}, volume = {81}, year = {2010} }

Herzog, C.P., Klebanov, I.R., Pufu, S.S., and Tesileanu, T. JHEP Nov 2010

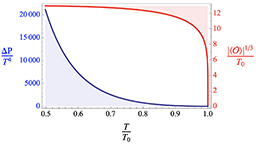

We find new black 3-brane solutions describing the “conifold gauge theory” at nonzero temperature and baryonic chemical potential. Of particular interest is the low-temperature limit where we find a new kind of weakly curved near-horizon geometry; it is a warped product \(\mathrm {AdS}_2 \times \mathbb R^3 \times T^{1,1}\) with warp factors that are powers of the logarithm of the AdS radius. Thus, our solution encodes a new type of emergent quantum near-criticality. We carry out some stability checks for our solutions. We also set up a consistent ansatz for baryonic black 2-branes of M-theory that are asymptotic to \(\mathrm {AdS}_4 \times Q^{1,1,1}\).

@article{Herzog2009, image = {mypub_imgs_qcrit.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {0911.0400}, author = {Herzog, Christopher P. and Klebanov, Igor R. and Pufu, Silviu S. and Tesileanu, Tiberiu}, doi = {10.1007/JHEP03(2010)093}, eprint = {0911.0400}, journal = {JHEP}, month = nov, number = {093}, title = {{Emergent Quantum Near-Criticality from Baryonic Black Branes}}, url = {https://link.springer.com/article/10.1007/JHEP03(2010)093}, pdf = {https://arxiv.org/pdf/0911.0400.pdf}, volume = {1003}, year = {2010} }

Gubser, S.S., Herzog, C.P., Pufu, S.S., and Tesileanu, T. Physical Review Letters Jul 2009

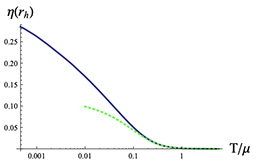

We establish that in a large class of strongly coupled 3+1 dimensional \(\mathcal N=1\) quiver conformal field theories with gravity duals, adding a chemical potential for the \(R\)-charge leads to the existence of superfluid states in which a chiral primary operator of the schematic form \(\mathcal O = \lambda\lambda + W\) condenses. Here \(\lambda\) is a gluino and \(W\) is the superpotential. Our argument is based on the construction of a consistent truncation of type IIB supergravity that includes a \(\mathrm U(1)\) gauge field and a complex scalar.

@article{Gubser2009, image = {mypub_imgs_supercond.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {0907.3510}, author = {Gubser, Steven S. and Herzog, Christopher P. and Pufu, Silviu S. and Tesileanu, Tiberiu}, doi = {10.1103/PhysRevLett.103.141601}, eprint = {0907.3510}, journal = {Physical Review Letters}, month = jul, number = {141601}, title = {{Superconductors from Superstrings}}, url = {https://journals.aps.org/prl/abstract/10.1103/PhysRevLett.103.141601}, pdf = {https://journals.aps.org/prl/pdf/10.1103/PhysRevLett.103.141601}, volume = {103}, year = {2009} }

Acosta-Kane, D., Acciarri, R., Amaize, O., Antonello, M., Baibussinov, B. et al. Nuclear Instruments and Methods in Physics Research, Section A: Accelerators, Spectrometers, Detectors and Associated Equipment Jul 2008

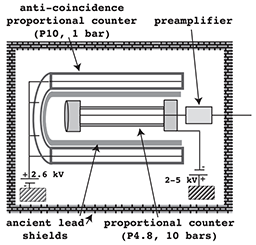

We report on the first measurement of ³⁹Ar in argon from underground natural gas reservoirs. The gas stored in the US National Helium Reserve was found to contain a low level of ³⁹Ar. The ratio of ³⁹Ar to stable argon was measured to be ≤ 4 × 10⁻¹⁷ (84% C.L.), no more than 5% of the value in atmospheric argon (³⁹Ar/Ar = 8 × 10⁻¹⁶). The total quantity of argon currently stored in the National Helium Reserve is estimated at 1000 tons. ³⁹Ar represents one of the most important backgrounds in argon detectors for WIMP dark matter searches. The findings reported demonstrate the possibility of constructing large multi-ton argon detectors with low radioactivity suitable for WIMP dark matter searches.
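The 5% comparison follows directly from the two ratios quoted in the abstract; as a quick check of the arithmetic, the measured upper limit divided by the atmospheric ratio gives

\[
\frac{4 \times 10^{-17}}{8 \times 10^{-16}} = 0.05,
\]

so the underground argon contains at most 5% of the atmospheric ³⁹Ar fraction, at the quoted confidence level.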

@article{Acosta-Kane2008, image = {mypub_imgs_argon.png}, bibtex_show = {true}, author = {Acosta-Kane, D. and Acciarri, R. and Amaize, O. and Antonello, M. and Baibussinov, B. and {Baldo Ceolin}, M. and Ballentine, C.J. and Bansal, R. and Basgall, L. and Bazarko, A. and Benetti, P. and Benziger, J. and Burgers, A. and Calaprice, F. and Calligarich, E. and Cambiaghi, M. and Canci, N. and Carbonara, F. and Cassidy, M. and Cavanna, F. and Centro, S. and Chavarria, A. and Cheng, D. and Cocco, A.G. and Collon, P. and Dalnoki-Veress, F. and de Haas, E. and {Di Pompeo}, F. and Fiorillo, G. and Fitch, F. and Gallo, V. and Galbiati, C. and Gaull, M. and Gazzana, S. and Grandi, L. and Goretti, A. and Highfill, R. and Highfill, T. and Hohman, T. and Ianni, Al. and Ianni, An. and LaCava, A. and Laubenstein, M. and Lee, H.Y. and Leung, M. and Loer, B. and Loosli, H.H. and Lyons, B. and Marks, D. and McCarty, K. and Meng, G. and Montanari, C. and Mukhopadhyay, S. and Nelson, A. and Palamara, O. and Pandola, L. and Pietropaolo, F. and Pivonka, T. and Pocar, A. and Purtschert, R. and Rappoldi, A. and Raselli, G. and Resnati, F. and Robertson, D. and Roncadelli, M. and Rossella, M. and Rubbia, C. and Ruderman, J. and Saldanha, R. and Schmitt, C. and Scott, R. and Segreto, E. and Shirley, A. and Szelc, A.M. and Tartaglia, R. and Tesileanu, Tiberiu and Ventura, S. and Vignoli, C. and Visnjic, C. and Vondrasek, R. 
and Yushkov, A.}, doi = {10.1016/j.nima.2007.12.032}, journal = {Nuclear Instruments and Methods in Physics Research, Section A: Accelerators, Spectrometers, Detectors and Associated Equipment}, keywords = {Cryogenic noble gases,Dark matter,Low background detectors}, number = {1}, title = {{Discovery of underground argon with low level of radioactive 39Ar and possible applications to WIMP dark matter detectors}}, volume = {587}, year = {2008}, url = {https://iopscience.iop.org/article/10.1088/1742-6596/120/4/042015/meta}, pdf = {https://iopscience.iop.org/article/10.1088/1742-6596/120/4/042015/pdf} }

Tesileanu, T., and Meyer-Ortmanns, H. International Journal of Modern Physics C Aug 2006

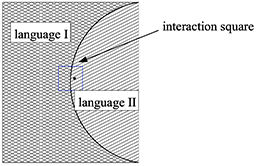

We consider the spreading and competition of languages that are spoken by a population of individuals. The individuals can change their mother tongue during their lifespan, pass on their language to their offspring and finally die. The languages are described by bitstrings, and their mutual difference is expressed in terms of their Hamming distance. Language evolution is determined by mutation and adaptation rates. In particular, we consider the case where the replacement of a language by another one is determined by their mutual Hamming distance. As a function of the mutation rate, we find a sharp transition between a scenario with one dominant language and fragmentation into many language clusters. The transition is also reflected in the Hamming distance between the two languages with the largest and second-largest numbers of speakers. We also consider the case where the population is localized on a square lattice and the interaction of individuals is restricted to a certain geometrical domain. Here, again, the Hamming distance plays an essential role in determining whether a language ultimately survives or goes extinct.
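The abstract describes languages as bitstrings that are compared by Hamming distance and that evolve through mutation. A minimal Python sketch of these two ingredients follows. Treating mutation as independent bit flips at a fixed rate is an assumption made here for illustration, and the helper names `hamming` and `mutate` are hypothetical; the distance-dependent replacement rule studied in the paper is not reproduced.

```python
import random

def hamming(a: str, b: str) -> int:
    """Number of positions at which two equal-length bitstrings differ."""
    if len(a) != len(b):
        raise ValueError("languages must have the same number of bits")
    return sum(x != y for x, y in zip(a, b))

def mutate(lang: str, rate: float, rng: random.Random) -> str:
    """Flip each bit independently with probability `rate` (assumed mutation rule)."""
    return "".join(
        ("1" if bit == "0" else "0") if rng.random() < rate else bit
        for bit in lang
    )

rng = random.Random(1)
a = "10110010"
b = "10011011"
print(hamming(a, b))              # 3: the strings differ at positions 2, 4 and 7
child = mutate(a, 0.1, rng)       # offspring language with a few random flips
print(child, hamming(a, child))
```

With `rate = 0` a language is passed on unchanged, and with `rate = 1` every bit flips, so the distance to the parent equals the string length.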

@article{Tesileanu2005, image = {mypub_imgs_languages.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {physics/0508229}, author = {Tesileanu, Tiberiu and Meyer-Ortmanns, Hildegard}, doi = {10.1142/S0129183106008765}, eprint = {physics/0508229}, journal = {International Journal of Modern Physics C}, month = aug, number = {3}, title = {{Competition of Languages and their Hamming Distance}}, url = {https://www.worldscientific.com/doi/abs/10.1142/S0129183106008765}, pdf = {https://arxiv.org/pdf/physics/0508229.pdf}, volume = {17}, year = {2006} }

Helling, R.C., Schupp, P., and Tesileanu, T. Physical Review D Mar 2006

A simple algorithm that gives the multipole vectors in terms of the roots of a polynomial is presented. We find that the reported alignment of the low-\(\ell\) multipole vectors can be summarised as an anti-alignment of these vectors with the dipole direction. This anti-alignment is present not only for \(\ell=2\) and 3 but also for \(\ell=5\) and higher. This alignment is likely due to non-linearity in the data processing. Our results are based on the three-year WMAP data; we also list corresponding results for the first-year data.

@article{Helling2006, image = {mypub_imgs_dipole.png}, bibtex_show = {true}, archiveprefix = {arXiv}, arxivid = {astro-ph/0603594}, author = {Helling, Robert C. and Schupp, Peter and Tesileanu, Tiberiu}, doi = {10.1103/PhysRevD.74.063004}, eprint = {astro-ph/0603594}, journal = {Physical Review D}, month = mar, number = {063004}, title = {{CMB statistical anisotropy, multipole vectors and the influence of the dipole}}, url = {https://journals.aps.org/prd/abstract/10.1103/PhysRevD.74.063004}, pdf = {https://journals.aps.org/prd/pdf/10.1103/PhysRevD.74.063004}, volume = {74}, year = {2006} }